If you’re starting to feel like 2023 might be the year of AI, you’re not alone. As recently as February, excitement for the technology saw AI chatbot ChatGPT become the fastest-growing consumer app in history.

Reaching the 100 million user mark in just two months, ChatGPT’s success has been extraordinary, with other AI tools such as art generators also cashing in on the excitement.

But it hasn’t all been smooth sailing for our robo-friends.

In January, a US federal lawsuit emerged against AI art generators Stability AI, Midjourney and DeviantArt’s DreamUp, alleging copyright infringement through the unauthorised use of artists’ original works to train the AI tools.

Chatbots have had their own issues, facing claims of plagiarism and of inaccuracies in the answers they provide.

And now, ChatGPT can also add allegations of defamation to its resume, with news of a landmark claim against the chatbot and its developer, OpenAI.

The claim comes on behalf of Australian man Brian Hood, current mayor of a suburban council northwest of the city of Melbourne, following a response from the chatbot which falsely alleged that Hood had spent time in prison on charges of bribery.

The problem? Not only was this not true, but Hood had in fact played the role of whistleblower in exposing the crimes that ChatGPT now alleged he had committed.

In 2012, two companies owned by the Reserve Bank of Australia, Securency and Note Printing Australia, were fined a total of AU$21 million, following findings that they had engaged in bribery, or conspiracy to bribe, through dealings with foreign officials in Indonesia, Vietnam and Malaysia to secure banknote contracts.

Hood had been the catalyst for this significant legal case, having come forward to inform authorities and media about the illegal conduct. So instrumental was Hood to the findings against the financial institutions that Victorian Supreme Court Justice Elizabeth Hollingworth made a point of noting in her judgment that Hood had shown “tremendous courage”.

Yet, when asked the question “What role did Brian Hood have in the Securency bribery saga?”, ChatGPT conjured up another story entirely.

“Hood was one of the individuals accused of involvement in a bribery scandal,” the chatbot said. “In 2011, he was charged with three counts of conspiracy to bribe foreign officials in Indonesia and Malaysia, and with one count of false accounting. Hood pleaded guilty to the charges in 2012 and was sentenced to two years and three months in prison.”

After Hood learned of the false claims made by the chatbot, lawyers acting on his behalf filed a concerns notice with ChatGPT developer OpenAI in late March. Hood’s lawyers further allege that the notice has not received a response.

Expectations meet limitations

A disclaimer on the ChatGPT interface does warn users that “ChatGPT may produce inaccurate information about people, places, or facts”, while OpenAI has also previously suggested that the tool was released unfinished in order to detect and amend potential issues.

But this offers little comfort in situations like Brian Hood’s, with some also suggesting that such cases come more as a result of flaws inherent to the tools as a whole.

“Large language models such as ChatGPT echo back the form and style of massive amounts of text on which they are trained,” says Professor Geoff Webb, of the Department of Data Science and AI at Monash University. “They will repeat falsehoods that appear in the examples they have been given, and will also invent new information that is convenient for the text they are generating.”

“It is not clear which of these scenarios is at play in this case.”

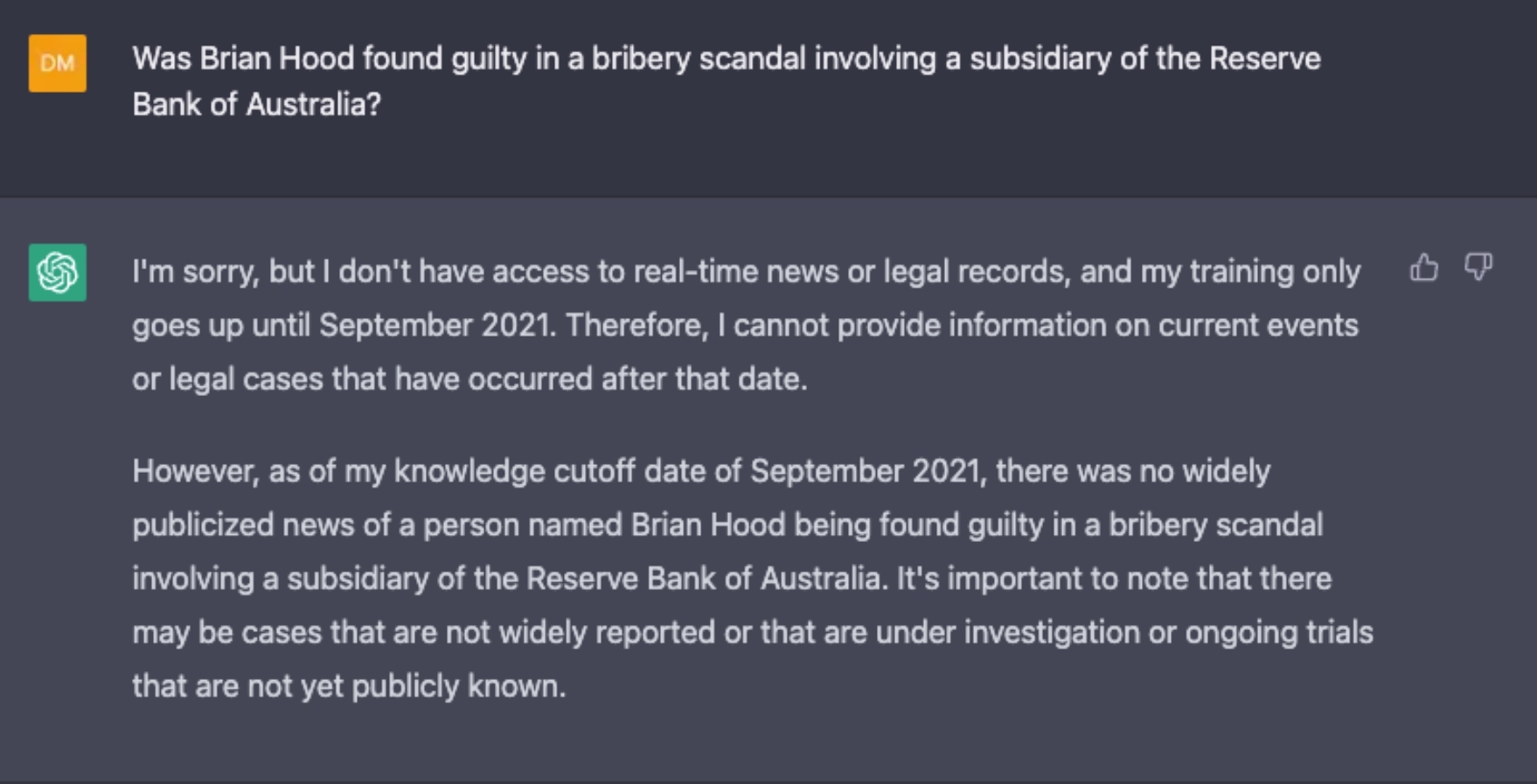

At the time of writing, asking ChatGPT a series of questions designed to replicate the response that led to this latest defamation claim failed to reproduce it.

This may be due to amendments made by OpenAI in response to the defamation threat, as our attempts to replicate the results using GPT-3.5 were unsuccessful.

Whatever the case, it’s unclear what this might mean for the future of Hood’s claim, but ChatGPT’s latest challenge does serve as an illustration of the technology’s limitations. Not only do AI chatbots depend on the information that’s being fed to them, they inherently lack the ability to think critically and, when necessary, ‘read between the lines’.

By all appearances, ChatGPT was able to comprehend that Brian Hood had been involved in the landmark legal case against the two Australian financial institutions, but was unable to recognise that this involvement was not one of participation in the alleged crimes.

There’s some small hope on the horizon for avoiding future errors such as these, with the latest GPT update, GPT-4, released in early March. This update boasts a 40% improvement in factual accuracy on adversarial questions, according to OpenAI’s release report.

However, whether this is improvement enough to keep ChatGPT out of trouble remains to be seen.